The history of petroleum production since the nineteenth century is largely characterized by the technology used to extract it from the Earth. Throughout this history, there have been many paradigm shifts in which a new technological regime dramatically improved the efficiency of petroleum extraction, transport, refining, and use. One of the most recent of these shifts is the development of rotary steerable systems (RSS), which allow drilling operators to control the direction of the drill bit while the drill is still in operation, that is, while the drill is under load and underground. Though the task seems rudimentary, the technology required to achieve this degree of remote control is remarkably complex. It is among the most advanced technology humanity has developed, including a highly sophisticated system of electronics that must operate reliably miles underground in some of the harshest conditions imaginable. These developments have been a critical component of the shale revolution and are a principal reason that the modern fracking industry exists. We will explore what advances made these systems possible, and why a task as simple as drilling sideways isn’t as easy as it seems.

EARLY DEVELOPMENTS IN DIRECTIONAL DRILLING

The first engine-drilled commercial oil wells were established in the 1850s and 1860s in Canada and the United States, but it took many decades before producers recognized the utility of drilling wells at an angle other than vertical.[i] The first widely known instance of a drilling rig intentionally drilling at an angle to achieve a specific objective occurred in 1934. That year, John Eastman and Roman Hines drilled slanted relief wells into an oilfield to reduce its pressure, an instrumental feat in reining in a well that had blown out. Eastman and Hines were featured in the May 1934 issue of Popular Science, which described Eastman’s primitive but effective surveying tool: “Into the hole went a single-shot surveying instrument of Eastman’s own inventions. As it hit bottom, a miniature camera within the instrument clicked, photographing the position of compass needle and a spirit-level bubble.” [ii] Indeed, the ability to “survey” is just as critical as having the hardware to drill in a given direction. Surveying means tracking the location, inclination (the angle of the wellbore from vertical), and azimuth (its compass direction) of the drill head. Because microelectronics had not yet been invented, early efforts relied on nothing more than crude instrumentation such as Eastman’s camera.
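A survey only becomes a position once successive stations are combined. Each survey station records measured depth (distance along the hole), inclination, and azimuth. The sketch below reconstructs the well path with the minimum curvature method, a standard industry technique that this article does not itself describe. It assumes depths in feet, angles in degrees, and azimuth measured clockwise from north. The `Station` class and the function names are illustrative, not taken from any particular survey package.

```python
import math
from dataclasses import dataclass


@dataclass
class Station:
    md: float   # measured depth along the hole, ft
    inc: float  # inclination from vertical, degrees
    azi: float  # azimuth clockwise from north, degrees


def min_curvature_step(s1: Station, s2: Station) -> tuple[float, float, float]:
    """Return (d_north, d_east, d_tvd) between two survey stations."""
    i1, i2 = math.radians(s1.inc), math.radians(s2.inc)
    a1, a2 = math.radians(s1.azi), math.radians(s2.azi)
    d_md = s2.md - s1.md
    # Dogleg angle: total change in hole direction between the two stations
    cos_dl = math.cos(i2 - i1) - math.sin(i1) * math.sin(i2) * (1 - math.cos(a2 - a1))
    dl = math.acos(max(-1.0, min(1.0, cos_dl)))
    # Ratio factor bends the two straight tangents onto a circular arc
    rf = 1.0 if dl < 1e-9 else (2 / dl) * math.tan(dl / 2)
    half = d_md / 2 * rf
    d_n = half * (math.sin(i1) * math.cos(a1) + math.sin(i2) * math.cos(a2))
    d_e = half * (math.sin(i1) * math.sin(a1) + math.sin(i2) * math.sin(a2))
    d_tvd = half * (math.cos(i1) + math.cos(i2))
    return d_n, d_e, d_tvd


def trajectory(stations: list[Station]) -> list[tuple[float, float, float]]:
    """Accumulate (north, east, true vertical depth) from the first station."""
    n = e = tvd = 0.0
    path = [(n, e, tvd)]
    for s1, s2 in zip(stations, stations[1:]):
        d_n, d_e, d_tvd = min_curvature_step(s1, s2)
        n, e, tvd = n + d_n, e + d_e, tvd + d_tvd
        path.append((n, e, tvd))
    return path


if __name__ == "__main__":
    survey = [Station(0, 0, 0), Station(1000, 0, 0), Station(1100, 10, 90)]
    for point in trajectory(survey):
        print("N=%.2f  E=%.2f  TVD=%.2f" % point)
```

In this example, building from vertical to 10° over 100 ft toward the east moves the bit about 8.7 ft east while adding about 99.5 ft of true vertical depth.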

The first piece of technology that truly laid the foundation for directional drilling was what is today known as a “mud motor.” This is a progressive cavity positive displacement motor (essentially a progressive cavity pump run in reverse), generally located immediately behind the drill bit in the drill string. The mud motors of today look vastly different from early versions, but they trace their lineage to two patents: Drills for Boring Artesian Wells, filed by C. G. Cross in 1873, and Machine for Operating Drills, filed by M. C. and C. E. Baker in 1884.

The first piece of technology enabling some measure of directional drilling

Source: Cross, C. G. Drills for Boring Artesian Wells. United States of America: Patent 142992. 23 September 1873.

Both designs were attempting to address the collapse or breakage of the drill string, in rotary and reciprocating drilling operations respectively. Because early steel was of such poor quality, rotating the entire drill string from top to bottom caused these failures. Shallow wells could be drilled without issue. As the well got deeper, however, the stresses on the drill string increased, owing to the growing mass of the string and the torsional flex inherent to the pipe or rods being used. Both Cross and the Bakers developed mechanisms in which the drill string could remain stationary while the drill bit turned. The string was pressurized with a fluid such as water, steam, or air, and this pressure drove a very rudimentary motor that turned the bit.[iii] [iv] Once the string could stay still while the bit turned, drill operators discovered that a section of slightly curved pipe in the drill string could influence the direction of the drill. They could install one length of curved pipe and lower the assembly to the “kickoff point” (the point at which a well begins to deviate from vertical). They would then drill until they had reached the desired change in well inclination and/or azimuth, raise the assembly, and swap the curved pipe for a straight one.
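The phrase “drill until they had reached the desired change in inclination” can be put in numbers. Assume the curved pipe produces a constant build rate $B$ and the curve stays in a single vertical plane. Here $B$ is degrees of inclination gained per 100 ft of measured depth, a standard industry measure that the source does not discuss. Under those assumptions the bend is a circular arc of radius $R$ (in feet). Going from inclination $I_1$ to $I_2$ (in degrees) then takes course length $\Delta MD$ and produces vertical and horizontal displacements $\Delta TVD$ and $\Delta H$:

```latex
R = \frac{180 \times 100}{\pi B} \approx \frac{5729.58}{B}

\Delta MD = \frac{100\,(I_2 - I_1)}{B}

\Delta TVD = R\,(\sin I_2 - \sin I_1), \qquad \Delta H = R\,(\cos I_1 - \cos I_2)
```

Take an assumed build rate of 3° per 100 ft, turning from vertical ($I_1 = 0^\circ$) to horizontal ($I_2 = 90^\circ$). This gives $R \approx 1{,}910$ ft and $\Delta MD = 3{,}000$ ft, with $\Delta TVD = \Delta H \approx 1{,}910$ ft.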

As mentioned earlier, the ability to track the location of the drill head is of the utmost importance when trying to steer a drill into a pocket of oil or gas. Early efforts in “measurement while drilling” (MWD) consisted of little more than pendulums to determine the inclination of the well and compasses to determine the azimuth. However, pendulums were ineffective in deeper wells. Compasses also gave unreliable readings inside well casing, because the steel distorted the Earth’s magnetic field. This led to the adoption of gyroscopic surveying instruments in the early twentieth century, descendants of which are still used today.
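A pendulum works because it hangs along the direction of gravity. Inclination is simply the angle between that direction and the axis of the tool. Modern MWD tools make the same measurement electronically with three-axis accelerometers. The sketch below assumes a tool frame whose z-axis points downhole along the borehole, with x and y perpendicular to it. The function name is illustrative.

```python
import math


def inclination_deg(gx: float, gy: float, gz: float) -> float:
    """Inclination from a gravity vector measured in the tool frame.

    Assumes z lies along the borehole pointing downhole; x and y are
    perpendicular to it. Units cancel, so raw sensor counts also work.
    """
    # Angle between the tool axis and the downward gravity vector:
    # the cross-axis component over the along-axis component
    return math.degrees(math.atan2(math.hypot(gx, gy), gz))


if __name__ == "__main__":
    print(inclination_deg(0.0, 0.0, 1.0))  # tool hanging straight down
    print(inclination_deg(1.0, 0.0, 0.0))  # tool lying horizontal
```

Using `atan2` with the cross-axis magnitude keeps the result well conditioned near both vertical and horizontal. A simple `acos(gz / |g|)` loses precision close to vertical.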

THE TECHNOLOGY

The difference between RSS and conventional systems begins at the kickoff point in the well. The systems used to steer the bit vary significantly among manufacturers, but they are built on two primary designs: “push-the-bit” and “point-the-bit.” Push-the-bit systems rely on a small array of pads around the circumference of a sleeve located just behind the bit. The system uses internal hydraulic pressure to selectively extend the pads outward against the side wall of the well. Pushing on one side causes the bit to curve in the direction opposite the actuated pad(s). In this way, the tool can steer the bit while it is drilling.
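Choosing how hard each pad should push is a small vector problem. The sketch below is a hypothetical allocation scheme, not any manufacturer’s control law. It assumes `n_pads` evenly spaced pads and treats each pad’s push as a reaction force on the bit pointing to the opposite side. It then splits a desired steering direction between the two pads whose reactions bracket it, so the combined reaction has unit magnitude in exactly that direction.

```python
import math


def pad_commands(steer_deg: float, n_pads: int = 3, pad0_deg: float = 0.0) -> list[float]:
    """Hypothetical non-negative pad force weights for a steering direction.

    Pad k sits at pad0_deg + k * 360 / n_pads. Pushing it against the wall
    drives the bit toward pad angle + 180 degrees.
    """
    if n_pads < 3:
        raise ValueError("need at least three pads to steer in any direction")
    s = 2 * math.pi / n_pads  # angular spacing between pads
    # Desired direction measured from pad 0's reaction direction
    rel = (math.radians(steer_deg) - math.radians(pad0_deg + 180.0)) % (2 * math.pi)
    k_raw = int(rel // s)
    a = min(max(rel - k_raw * s, 0.0), s)  # offset inside the bracketing sector
    k = k_raw % n_pads
    weights = [0.0] * n_pads
    # Law-of-sines split: the two reactions sum to a unit vector at angle a
    weights[k] += math.sin(s - a) / math.sin(s)
    weights[(k + 1) % n_pads] += math.sin(a) / math.sin(s)
    return weights


def net_push(weights: list[float], pad0_deg: float = 0.0) -> tuple[float, float]:
    """Resultant (x, y) of the pad reactions for the given weights."""
    n = len(weights)
    x = y = 0.0
    for k, w in enumerate(weights):
        react = math.radians(pad0_deg + 180.0 + k * 360.0 / n)
        x += w * math.cos(react)
        y += w * math.sin(react)
    return x, y


if __name__ == "__main__":
    w = pad_commands(30.0)
    x, y = net_push(w)
    print([round(v, 3) for v in w], round(math.degrees(math.atan2(y, x)), 3))
```

With three pads the weights can exceed 1 for directions midway between two reactions. A real tool would scale them to its available hydraulic pressure.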

A “push-the-bit” system. The pads are actuated in order to “push” the bit in the opposite direction.

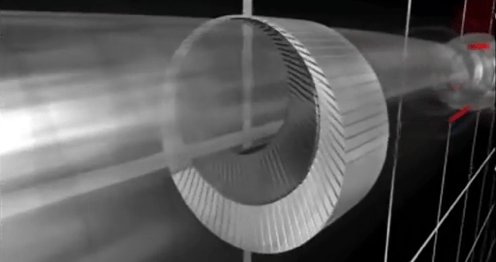

Point-the-bit systems work in a very different manner. They steer by using a variety of components to push on the drive shaft, deflecting the bit by way of a fulcrum between the control unit and the bit. Companies have developed distinctive and clever ways to create this bit deflection. For example, Weatherford uses a radial array of sixty-six electronically triggered and hydraulically actuated pistons to flex the shaft in the desired direction.[v] Halliburton uses a pair of nested eccentric rings through which the drive shaft turns. These rings are rotated independently to create the desired bit deflection.[vi]
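The nested-ring idea can be illustrated with a simplified geometric model. This is an assumption made for illustration, not Halliburton’s published design. Suppose each ring has the same eccentricity `e`, so rotating a ring to angle θ shifts the shaft centre by a vector of length `e` pointing at θ. The two shifts add: the offsets cancel when the rings are opposed and reach `2e` when they are aligned. Any offset in between, in any direction, is reachable by rotating the rings symmetrically about the target direction.

```python
import math


def ring_angles(offset: float, direction_deg: float, e: float = 1.0) -> tuple[float, float]:
    """Ring angles (degrees) giving a shaft offset of `offset` toward direction_deg.

    Model: offset = e*u(alpha) + e*u(beta), whose magnitude is
    2*e*cos((alpha - beta)/2) and whose direction is (alpha + beta)/2.
    """
    if not 0.0 <= offset <= 2 * e:
        raise ValueError("offset must lie between 0 and 2*e")
    half_gap = math.degrees(math.acos(offset / (2 * e)))
    return (direction_deg + half_gap) % 360, (direction_deg - half_gap) % 360


def shaft_offset(alpha_deg: float, beta_deg: float, e: float = 1.0) -> tuple[float, float]:
    """Forward model: (x, y) shaft-centre offset for the given ring angles."""
    a, b = math.radians(alpha_deg), math.radians(beta_deg)
    return e * (math.cos(a) + math.cos(b)), e * (math.sin(a) + math.sin(b))


if __name__ == "__main__":
    alpha, beta = ring_angles(1.2, 45.0)  # offset 1.2e toward 45 degrees
    x, y = shaft_offset(alpha, beta)
    print(alpha, beta, math.hypot(x, y), math.degrees(math.atan2(y, x)))
```

In this model the offset magnitude sets how sharply the bit is pointed, and the common rotation of both rings sets the direction. That is why two independently rotated rings are enough to aim the bit anywhere.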

Weatherford’s “point-the-bit” RSS

Halliburton’s “point-the-bit” RSS

Both systems need the control unit to remain stationary, or “upright,” whether or not the drill string is rotating. Otherwise, the control system would have no fixed frame of reference from which to command the direction of travel. Many systems use blade-like devices mounted radially on the outside of the control unit, similar to the pads in push-the-bit systems and akin to the fletching of an arrow. These blades press against the sidewalls of the well with enough friction to keep the unit from rotating. High-performance bearings at the front and rear of the control unit let the drive shaft turn freely inside it. This is essential to maintain full control of the well’s direction.

Measurement while drilling methods have also advanced substantially since the early days of directional drilling. It is no longer necessary to use pendulums or downhole cameras to track the progress of the well. The first major advancement was “mud pulse telemetry.” This is not in itself a tool for pinpointing the location of the drill head, but rather a method of data transmission. The drill, and by extension the entire drill string, is continuously pressurized with drilling fluid (also known as “mud”). A clever innovator realized that this baseline pressure could be modulated over time to carry information. To create these pressure variations, electronics rapidly actuate a valve on the drilling platform to send data down to the drill, and a valve in the drill to send data back up to the platform. The resulting pulses encode information about the location, depth, inclination, and azimuth of the drill. The pressure variations are received in real time as analog signals and then converted into digital data. This happens both at a device coupled to the drill string on the rig and at a device within the control unit adjacent to the drill head. Mud pulse telemetry is the most commonly used method of data transmission in drilling operations, because it is highly reliable and usually fast enough for most wells. Current technology can reach data rates of up to 40 bits/s, but the signal attenuates significantly with depth, and these systems often transmit at far less than 10 bits/s. For highly irregular wells at great depth, this low bandwidth can cause substantial rig downtime while crews wait for information to be exchanged, so other methods may be employed.[vii]
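To make “encoding data in the mud” concrete, the sketch below packs one survey reading into bits and sends each bit as a high or low pressure level (simple on-off keying). It then recovers the values on the other end. Everything here is hypothetical, including the 24-bit frame, the 3,000 psi baseline, and the 50 psi pulse height. Real mud pulse systems use more elaborate modulation and add synchronization and error checking, so this is not any vendor’s scheme.

```python
# Hypothetical frame: a 12-bit inclination followed by a 12-bit azimuth.
FIELD_BITS = 12


def quantize(value, lo, hi, bits=FIELD_BITS):
    """Map a value in [lo, hi] onto an unsigned integer `bits` wide."""
    if not lo <= value <= hi:
        raise ValueError("value out of range")
    levels = (1 << bits) - 1
    return round((value - lo) / (hi - lo) * levels)


def dequantize(code, lo, hi, bits=FIELD_BITS):
    """Inverse of quantize, accurate to half a quantization step."""
    levels = (1 << bits) - 1
    return lo + code / levels * (hi - lo)


def encode_survey(inc_deg, azi_deg):
    """Pack inclination and azimuth into a list of bits, most significant first."""
    word = (quantize(inc_deg, 0, 180) << FIELD_BITS) | quantize(azi_deg, 0, 360)
    return [(word >> i) & 1 for i in range(2 * FIELD_BITS - 1, -1, -1)]


def to_pressure(bits, base_psi=3000.0, pulse_psi=50.0):
    """On-off keying: a 1 bit raises pressure by pulse_psi for one bit period."""
    return [base_psi + pulse_psi * b for b in bits]


def from_pressure(samples, base_psi=3000.0, pulse_psi=50.0):
    """Recover bits by thresholding each sample halfway between the two levels."""
    return [1 if p > base_psi + pulse_psi / 2 else 0 for p in samples]


def decode_survey(bits):
    """Unpack inclination and azimuth (degrees) from a received bit list."""
    word = 0
    for b in bits:
        word = (word << 1) | b
    inc = dequantize(word >> FIELD_BITS, 0, 180)
    azi = dequantize(word & ((1 << FIELD_BITS) - 1), 0, 360)
    return inc, azi


def seconds_to_send(n_bits, bit_rate):
    """Transmission time for n_bits at a data rate given in bits/s."""
    return n_bits / bit_rate


if __name__ == "__main__":
    frame = encode_survey(87.5, 123.4)
    inc, azi = decode_survey(from_pressure(to_pressure(frame)))
    print(f"received inc={inc:.2f} deg, azi={azi:.2f} deg")
    for rate in (40, 10, 1):
        print(f"{len(frame)} bits at {rate} bits/s: {seconds_to_send(len(frame), rate):.1f} s")
```

At 10 bits/s this 24-bit frame takes 2.4 s, and at 1 bit/s it takes 24 s, before any synchronization or error-checking overhead. Those delays accumulate into rig downtime when many readings must be exchanged.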

Electromagnetic (EM) telemetry has emerged more recently as a system far superior to mud pulse telemetry in certain situations. EM systems use the voltage difference between the drill string and a ground rod driven into the Earth some distance from the well. This system can transmit data much faster than mud pulse telemetry in shallow wells and in wells drilled with air rather than a liquid, but the electromagnetic signal attenuates very rapidly with depth. Within the last decade, some companies have also developed drill pipe that incorporates a wire in the pipe wall. The wire is connected from joint to joint of pipe and can offer data rates orders of magnitude faster than either of the previously mentioned methods: over 1,000,000 bits/s. Using such a system requires drill operators to be much more attentive when building the drill string. Each connection must be resilient enough to withstand the harsh downhole environment. Wired pipe may well be the future of MWD. Until it becomes common among drillers, however, manufacturers will be unable to realize the economies of scale that come with mass production and that ultimately make these components cheaper and more reliable.[viii]
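The rapid attenuation of the EM signal can be illustrated with the textbook skin-depth formula for a plane wave in a uniform conductive medium, δ = √(2ρ / (ωμ₀)). Here ρ is the formation resistivity and ω is the angular frequency of the signal. Real EM-MWD links involve more complicated geometry, so treat this as a simplified sketch. The 10 and 1 ohm-m resistivities and the 10 Hz carrier frequency are assumed example values, and distances are in meters.

```python
import math

MU0 = 4e-7 * math.pi  # magnetic permeability of free space, H/m


def skin_depth_m(resistivity_ohm_m: float, freq_hz: float) -> float:
    """Plane-wave skin depth in a uniform, non-magnetic conductive formation."""
    omega = 2 * math.pi * freq_hz
    return math.sqrt(2 * resistivity_ohm_m / (omega * MU0))


def amplitude_fraction(depth_m: float, resistivity_ohm_m: float, freq_hz: float) -> float:
    """Fraction of signal amplitude left after travelling depth_m, exp(-z / delta)."""
    return math.exp(-depth_m / skin_depth_m(resistivity_ohm_m, freq_hz))


if __name__ == "__main__":
    for rho in (10.0, 1.0):  # assumed formation resistivities, ohm-m
        d = skin_depth_m(rho, 10.0)  # assumed 10 Hz carrier
        left = amplitude_fraction(3000.0, rho, 10.0)
        print(f"rho={rho} ohm-m: skin depth {d:.0f} m, amplitude left at 3000 m: {left:.2e}")
```

At 10 ohm-m and 10 Hz the skin depth is about 503 m, so only about a quarter of a percent of the amplitude survives 3,000 m. More conductive rock shortens the usable range further.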

This collection of technologies has enabled drilling operators to reach distances that their predecessors likely couldn’t have imagined. It is not uncommon for a horizontal well to achieve a lateral length of over one mile, and some operations have gone much farther. In 2016, Halliburton and Eclipse Resources drilled the longest horizontal well in the U.S., with a lateral section exceeding 18,500 feet.[ix] This still falls well short of the record set by Maersk Oil in 2008, when it completed an offshore well in Qatar with a horizontal section 35,750 feet long. Highlighting the precision of this technology, that nearly seven-mile horizontal section was drilled through a reservoir target only twenty feet thick beneath the sea floor.[x]
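A quick calculation shows how demanding that Qatari figure is. Assume the lateral starts at the centre of a perfectly flat 20 ft layer and holds a straight line with a constant angular error. It leaves the target once it has drifted 10 ft. The geometry is deliberately simplified, since real laterals are corrected continuously and formations are not flat. The function name is illustrative.

```python
import math


def max_steady_error_deg(lateral_ft: float, target_thickness_ft: float) -> float:
    """Largest constant angular error that keeps a straight lateral inside a flat target.

    Simplified geometry: start at the centre of the layer, never correct,
    and exit once the drift reaches half the layer thickness.
    """
    return math.degrees(math.atan((target_thickness_ft / 2.0) / lateral_ft))


if __name__ == "__main__":
    # Figures from the Al Shaheen example: 35,750 ft lateral, 20 ft target
    print(f"{max_steady_error_deg(35750, 20):.4f} degrees")
```

The result is less than two hundredths of a degree of uncorrected error over the full length. Drilling such a well therefore depends on continuous measurement and steering rather than on aiming the bit once.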

BENEFITS AND ROLE IN THE SHALE REVOLUTION

It is only with highly advanced rotary steerable systems and measurement while drilling methods that operators are able to achieve these feats of engineering. Directional drilling offers several fundamental advantages over conventional drilling, the first and perhaps most obvious of which is the ability to drill more wells from the same platform or rig. Drillers once had to construct many wellheads and rig sites close to one another to increase the rate of extraction from a single reservoir. Directional drilling instead allows operators to reach dozens of different wells from a single pad or platform. This has had a monumental effect on the efficiency of resource recovery. It introduces economies of scale that dramatically reduce the cost of infrastructure and the time crews spend moving from location to location. The result is a substantial, lasting increase in the productivity of the assets under management.

Directional drilling also frees drillers from most topographical constraints on where the rig and wellhead are located. Drillers are no longer forced to haul materials off-road, over mountains, or through rivers to position the rig above a prospective reservoir, and far fewer roads and bridges have to be constructed to keep the rig accessible. One can imagine many circumstances in which it would be immensely troublesome to clear forests, fill in streams, or level the side of a mountain just to create a good working surface for drillers. With these systems, drillers can instead locate the wellhead wherever it is easiest. In this way, this method of resource extraction greatly reduces the environmental disturbance caused by the industry. Additionally, resources located under small bodies of water can be extracted from shore, and resources located under cities or populated areas can be extracted from a safe distance.

Compared with the directional drilling technology of the mid-twentieth century, rotary steerable systems eliminate the downtime drillers faced when their only way to influence the well’s direction was a curved piece of pipe. Drillers had to pull the entire drill string out of the well to insert the curved pipe and drill a dogleg. Once they had reached the desired inclination and/or azimuth, they had to pull the string out again to restore its straight configuration. Pulling and rerunning the drill string is time-consuming, so being able to steer the drill while it is operating eliminated this downtime, shaving days or weeks off a project. This further reduces operating costs for the company and, by extension, can lower oil and gasoline prices for consumers.
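The cost of the curved-pipe method can be estimated with simple arithmetic. Each swap is a round trip: pull the whole string out, then run it back to depth. One trip happens at the kickoff point and a second at the end of the curve. The sketch below assumes a hypothetical tripping speed and hypothetical depths; actual speeds vary widely with rig and hole conditions.

```python
def round_trip_hours(depth_ft: float, trip_speed_ft_per_hr: float) -> float:
    """Time to pull the string from depth_ft and run it back (one round trip)."""
    return 2.0 * depth_ft / trip_speed_ft_per_hr


def bent_pipe_overhead_hours(kickoff_ft: float, end_of_curve_ft: float,
                             trip_speed_ft_per_hr: float) -> float:
    """Extra tripping time for one curve drilled with a swapped-in curved joint.

    Trip 1 happens at the kickoff point (to insert the curved pipe);
    trip 2 happens at the end of the curve (to swap back to straight pipe).
    """
    return (round_trip_hours(kickoff_ft, trip_speed_ft_per_hr)
            + round_trip_hours(end_of_curve_ft, trip_speed_ft_per_hr))


if __name__ == "__main__":
    # Hypothetical: kickoff at 8,000 ft, curve ends at 9,000 ft, 1,000 ft/hr trips
    print(bent_pipe_overhead_hours(8000, 9000, 1000), "hours of tripping per curve")
```

Under these assumed numbers a single curve costs 34 hours of tripping with no drilling progress. A well with several direction changes multiplies that overhead, which is the downtime a steerable tool avoids.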

Directional drilling has also been critical to the growth in market share of shale oil and gas; had this technology not been developed, the shale revolution would not have been possible. Conventional fossil fuel resources have historically been extracted from permeable formations. There, companies only needed to pierce the formation and let the immense pressure deliver the resource to the surface. Shale gas, by contrast, is trapped within rock so impermeable that it will not flow on its own; one must fracture the rock itself to allow the gas to flow. This means that developing a well requires drillers to have a presence in as much of the formation as possible to maximize the volume of rock they can fracture. Because these sedimentary formations were laid down in layers, they are typically very thin relative to the area they cover. Drillers must therefore steer their tools horizontally to maximize the surface area of the wellbore in contact with the resource. In practice, this means that gas extraction from shale involves far more horizontal drilling than vertical. Moreover, individual shale formations can rise and fall along their extent, necessitating precise guidance to keep the drill within the confines of the formation. In short, extracting shale resources would not be economically feasible without directional drilling.
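The advantage of staying inside a thin layer can be put in numbers. Treat the borehole as a cylinder and compare the wall area exposed inside the pay zone for a vertical well that crosses the layer once and for a horizontal lateral that runs along it. The 100 ft layer thickness, 10,000 ft lateral, and 8.5 in hole diameter below are hypothetical values chosen for illustration.

```python
import math


def wall_area_sqft(length_in_pay_ft: float, hole_diameter_in: float = 8.5) -> float:
    """Borehole wall area inside the pay zone, treating the hole as a cylinder."""
    return math.pi * (hole_diameter_in / 12.0) * length_in_pay_ft


def contact_gain(formation_thickness_ft: float, lateral_length_ft: float,
                 hole_diameter_in: float = 8.5) -> float:
    """Ratio of pay-zone wall area: horizontal lateral vs. a vertical well."""
    vertical = wall_area_sqft(formation_thickness_ft, hole_diameter_in)
    horizontal = wall_area_sqft(lateral_length_ft, hole_diameter_in)
    return horizontal / vertical


if __name__ == "__main__":
    print(f"vertical:   {wall_area_sqft(100):,.0f} sq ft in the pay zone")
    print(f"horizontal: {wall_area_sqft(10_000):,.0f} sq ft in the pay zone")
    print(f"gain: {contact_gain(100, 10_000):.0f}x")
```

Because the hole diameter cancels out, the gain is simply the lateral length divided by the layer thickness. The thinner the formation, the more a horizontal well gains.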

CONCLUSION

As one can see, modern directional drilling and RSS technology required nearly a century and a half of incremental innovation to become what it is today. In many ways, this technology is a testament to humanity’s persistence in improving the efficiency of business operations, never relenting in the pursuit to reduce costs and maximize profits. Innovators continually devise new ways to more efficiently extract, transport, refine, and use our natural resources, making the resulting commodities cheaper and more accessible to the disadvantaged communities of the world. Directional drilling has unlocked reservoirs of fossil fuels that only a decade ago were thought to be far too costly to extract. The advanced systems available today have completely transformed that calculus. Given how formative rotary steerable systems have been over the past two decades of oil and gas extraction, one can only imagine what is in store for the industry in the future.

ENDNOTES

[i] Oil Museum of Canada. Oil Springs. 21 November 2015. 10 February 2017. <http://www.lclmg.org/lclmg/Museums/OilMuseumofCanada/BlackGold2/OilHeritage/OilSprings/tabid/208/Default.aspx>.

[ii] American Oil & Gas Historical Society. Technology and the Conroe Crater. 2017. 9 February 2017. <http://aoghs.org/technology/directional-drilling/>.

[iii] Baker, M. C. and C. E. Baker. Machine for Operating Drills. United States of America: Patent 292888. 5 February 1884.

[iv] Cross, C. G. Drills for Boring Artesian Wells. United States of America: Patent 142992. 23 September 1873.

[v] Weatherford. Revolution High-Dogleg. 2017. 19 February 2017. <http://www.weatherford.com/en/products-services/drilling-formation-evaluation/drilling-services/rotary-steerable-systems/revolution-high-dogleg>.

[vi] Halliburton. SOLAR Geo-Pilot XL Rotary Steerable System. 2017. 7 February 2017. <http://www.halliburton.com/en-US/ps/sperry/drilling/directional-drilling/rotary-steerables/solar-geo-pilot-xl-rotary-steerable-system.page>.

[vii] Wassermann, Ingolf, et al. “Mud-pulse telemetry sees step-change improvement with oscillating shear valves.” Oil and Gas Journal 106.24 (2008).

[viii] National Oilwell Varco. IntelliServ. 2017. 11 February 2017. <http://www.nov.com/Segments/Wellbore_Technologies/IntelliServ/IntelliServ.aspx>.

[ix] World Oil. Halliburton, Eclipse Resources complete longest lateral well in U.S. 31 May 2016. 8 February 2017. <http://www.worldoil.com/news/2016/5/31/halliburton-eclipse-resources-complete-longest-lateral-well-in-us>.

[x] Gulf Times. Maersk drills longest well at Al Shaheen. 21 May 2008. 14 February 2017. <http://www.gulftimes.com/site/topics/article.asp?cu_no=2&item_no=219715&version=1&template_id=48&parent_id=28>.